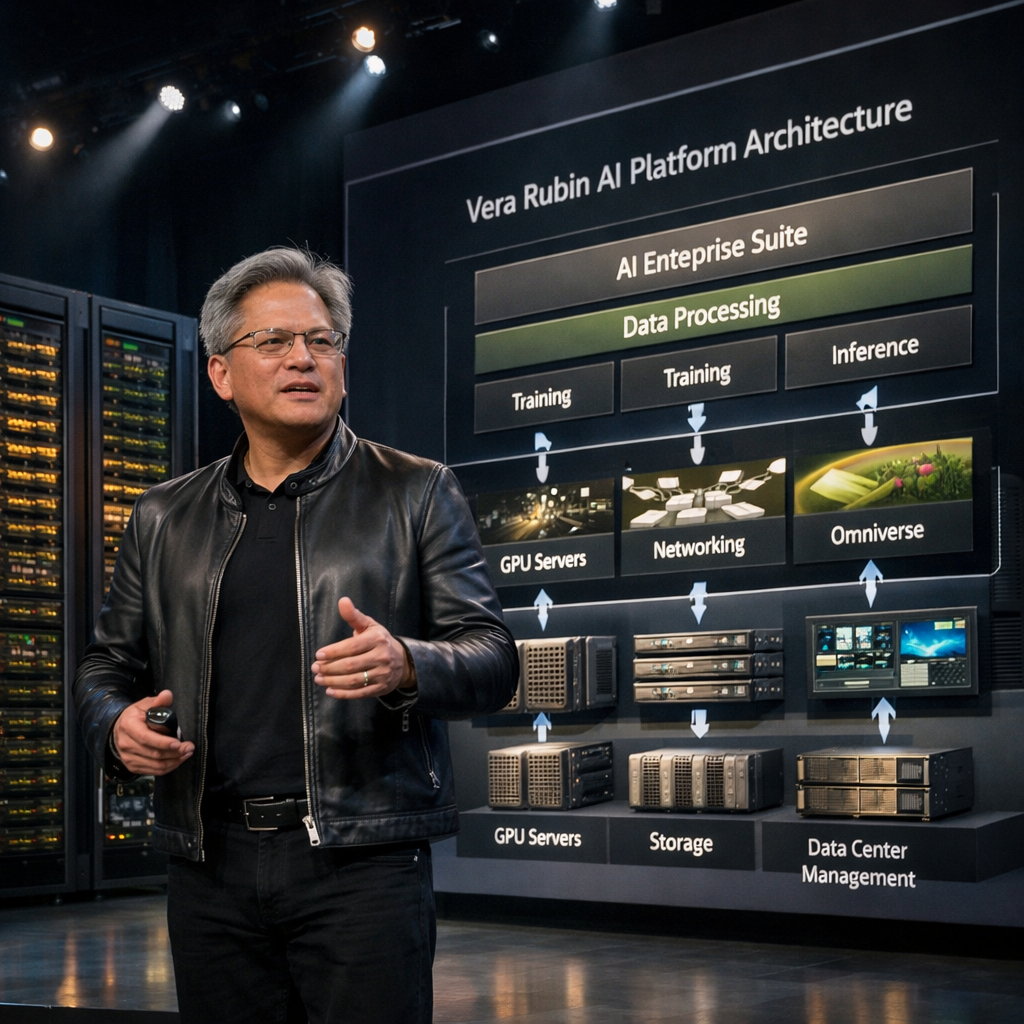

On 16 March 2026, NVIDIA unveiled the Vera Rubin platform, a new generation of high-performance AI computing infrastructure designed to power the world’s largest artificial intelligence factories and enterprise AI deployments.

The announcement was made during NVIDIA GTC 2026 in San Jose, where the company confirmed that seven new chips within the Vera Rubin platform have entered full production, marking a significant expansion of commercial AI infrastructure available to enterprises and cloud providers.

A New Infrastructure Layer for AI Factories

The Vera Rubin platform is engineered as a modular AI computing stack combining GPUs, CPUs, networking, storage, and inference accelerators into integrated racks capable of running large-scale AI workloads.

Key components include:

- Vera Rubin NVL72 GPU racks

- Vera CPU racks

- NVIDIA Groq 3 LPX inference accelerator racks

- BlueField-4 STX storage infrastructure

- Spectrum-6 SPX Ethernet networking systems

Together, these systems are designed to support the entire lifecycle of artificial intelligence workloads, from model training and post-training optimization to large-scale AI inference and autonomous agent systems.

Scaling the Next Generation of Enterprise AI

The platform is built specifically to support what NVIDIA calls “AI factories”: large computing environments in which organizations continuously train, deploy, and operate AI models.

By integrating high-density compute racks with networking and storage optimized for AI data pipelines, the system is intended to let companies process vast datasets, train advanced models faster, and deploy real-time AI services at scale.

This architecture also supports emerging agentic AI systems, where AI agents perform complex autonomous tasks such as software development, decision-making, and workflow orchestration.

A Global Ecosystem of Deployments

The Vera Rubin platform is expected to be deployed by cloud service providers, enterprise AI platforms, and large-scale computing facilities, forming a new generation of AI infrastructure capable of supporting industrial-scale machine intelligence.

The platform’s design allows organizations to configure infrastructure for different stages of AI workloads, including pre-training, fine-tuning, and inference, so that companies can scale AI operations without building entirely custom architectures.

Why This Deployment Matters

The launch reflects a broader shift in enterprise technology strategy: AI infrastructure is moving from experimental computing clusters to industrial-scale production systems.

Instead of isolated AI experiments, companies are now building permanent computing environments dedicated to continuous AI development and deployment.

With the Vera Rubin platform entering full production, technology vendors, hyperscale cloud providers, and enterprise operators now have access to a new generation of hardware specifically engineered for AI-native computing at global scale.
